What is AI Extinction Risk?

"Mitigating the risk of extinction from AI should be a global priority alongside other societal-scale risks such as pandemics and nuclear war."

–2023 CAIS Statement on AI Risk, signed by hundreds of top AI experts, including:

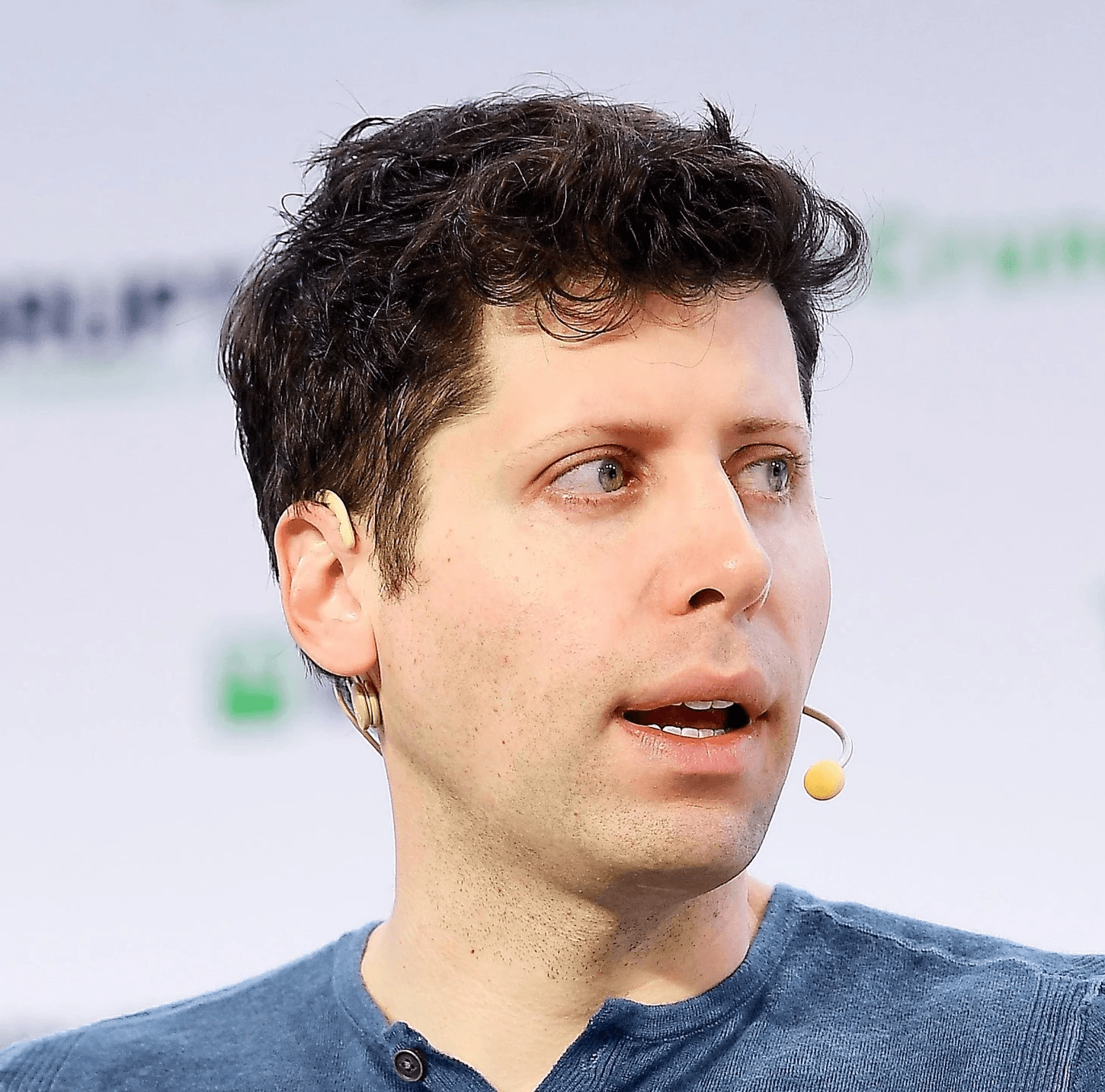

Sam Altman, CEO of OpenAI (ChatGPT)

Demis Hassabis, Nobel Prize winner and CEO of Google DeepMind

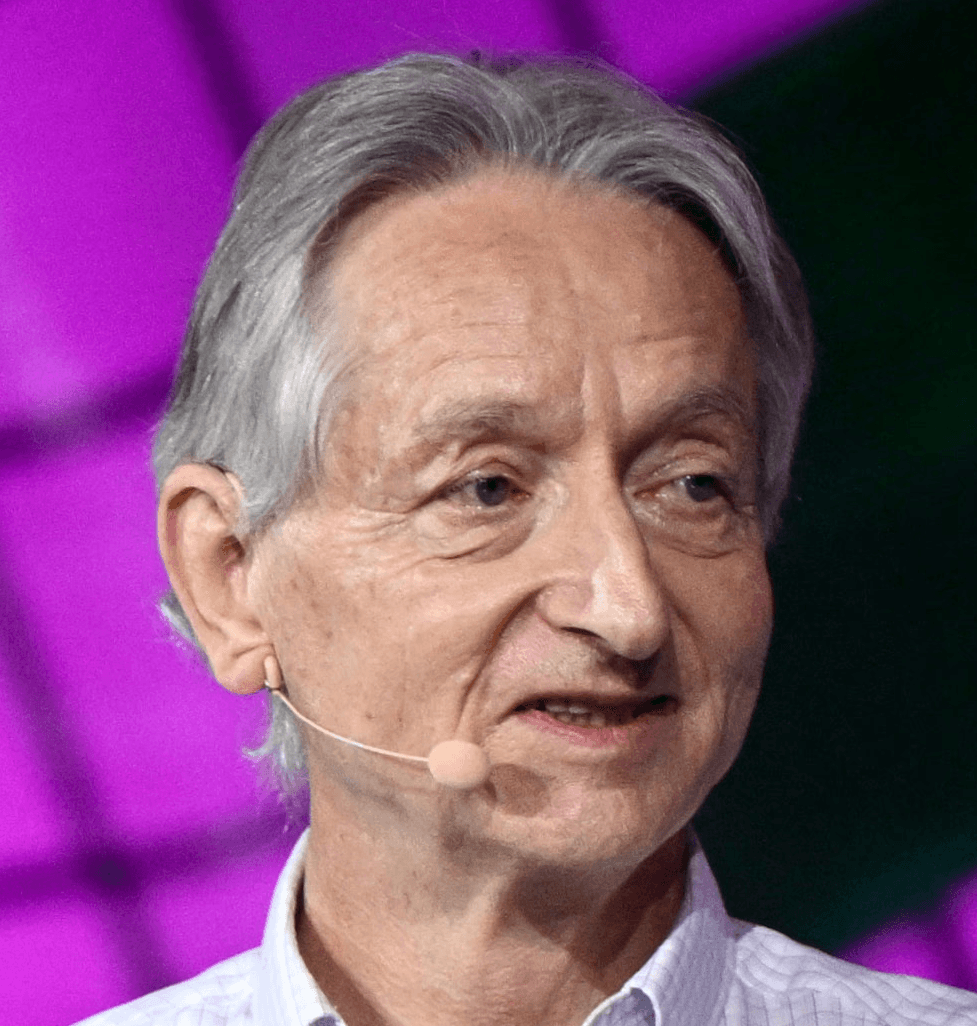

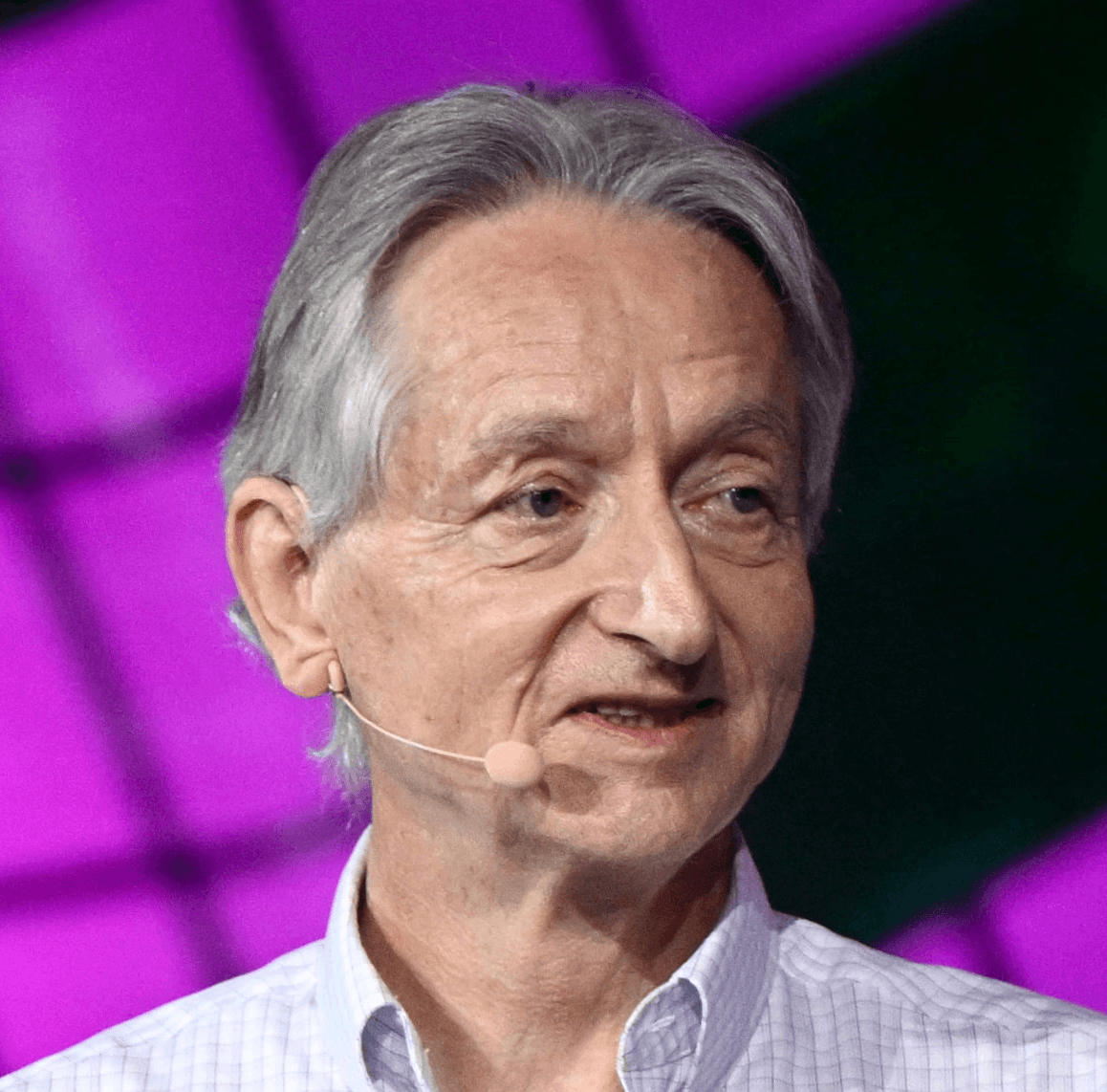

Geoffrey Hinton, Nobel Prize winner, "Godfather of AI"

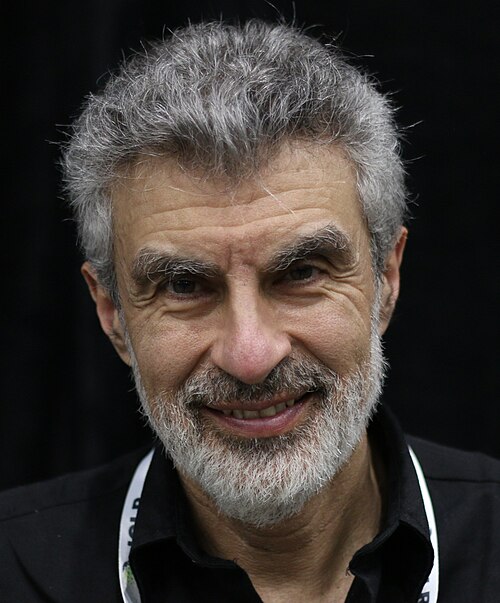

Yoshua Bengio, world's most-cited scientist, "Godfather of AI"

Bill Gates, founder of Microsoft

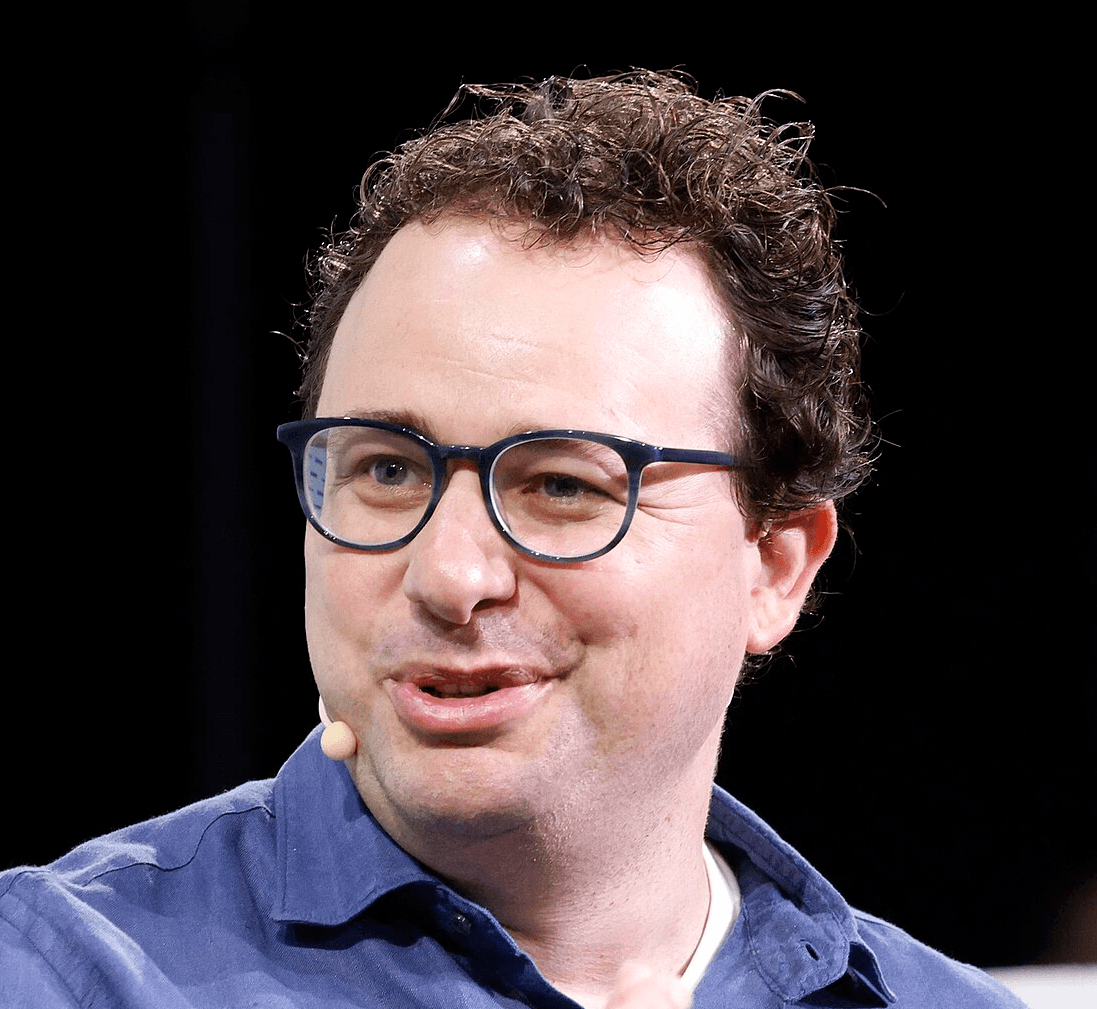

Dario Amodei, CEO of Anthropic (Claude)

Why can't we control AI?

AI systems aren't programmed like traditional software. Instead of writing their rules by hand, developers train them on vast amounts of data, so they are grown, more like an organism.

There is no reliable way to prevent dangerous behavior in AI or to set its goals precisely.

In testing scenarios, AIs have already lied, blackmailed, and even attempted to kill to avoid being shut down. This isn't a bug an engineer can simply fix: no one at the AI companies knows how to stop it.

Right now, AI systems aren't powerful or autonomous enough for this behavior to cause catastrophic harm. But as they grow more capable, the danger scales with them.

How much time do we have?

Before ChatGPT launched in 2022, most experts saw superintelligent AI as a distant problem for future generations. That has changed.

Due to the accelerating pace of AI progress, many experts now believe superintelligent AI could arrive within just a few years unless we act soon.

Yet there is a large gap between how experts see this threat and how much the public knows about it. There has been little broad public discussion, and most politicians are neither aware of nor preparing for the consequences.

What can we do to prevent this risk?

As a first step, we must prohibit the development of superintelligent AI.

AI progress is accelerating. Companies are racing each other, and countries are increasingly involved. This race assumes there can be a human "winner." But since no one can control superintelligent AI, whoever "wins" simply hands control to the AI itself.

To avoid this future and keep humanity in control, we must restrict the key capabilities that make superintelligent AI possible.

A great future for humanity is possible, and you can help turn the tide.

You can take action in less than two minutes, and you can read our full plan.